Data Rich, Insight Poor

Compensation teams sit on some of the richest data in their organization—pay history, market benchmarks, equity schedules, performance ratings, organizational hierarchies—and yet most spend their days buried in reactive work. Fielding manager questions about offer ranges. Manually reconciling survey data across spreadsheets. Pulling together board-ready analyses that take days to assemble and minutes to present.

The pressure to change this is no longer theoretical. According to Pave's AI Pulse Survey, over half of compensation teams now face moderate to extreme pressure from leadership to adopt AI tools. And Gartner estimates that by 2030, roughly half of today's HR activities will be automated or performed by AI agents, fundamentally reshaping roles and workflows across the function.

Yet despite that pressure, only 16% of compensation professionals report using compensation-specific AI tools. The vast majority (84%) are using general-purpose writing tools like ChatGPT and Claude. They're drafting emails and summarizing documents, not transforming how pay decisions get made.

The gap between leadership expectations and on-the-ground reality isn't a technology problem. It's a trust problem. And AI agents—a new category distinct from the chatbots and copilots most teams have experimented with—may be the key to closing it. Not by removing humans from the loop, but by giving every compensation professional the analytical firepower of a much larger team.

The question isn't "Should we use AI in comp?" It's "Where does AI add leverage without adding risk?"

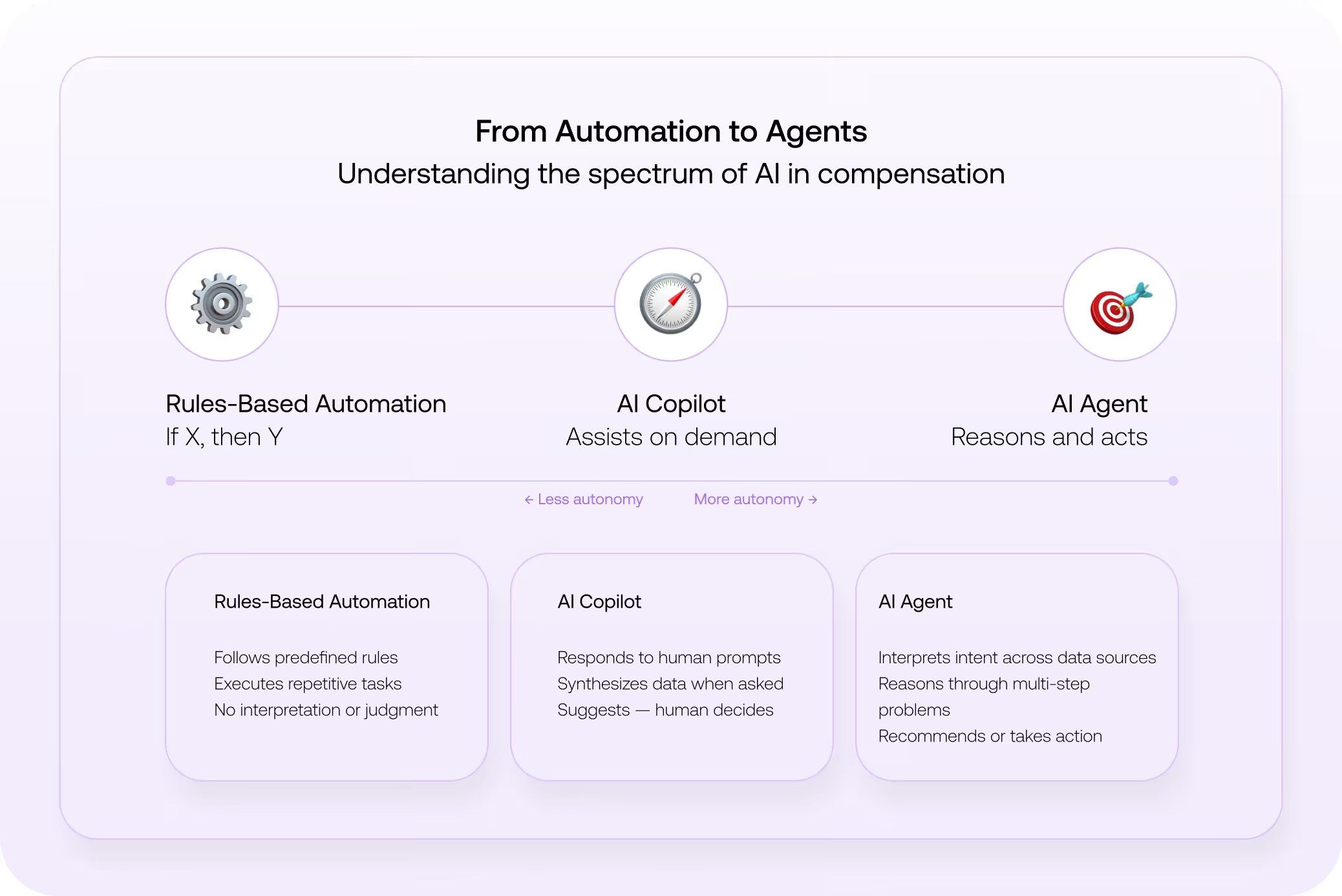

From Automation to Agents: What's Actually Different

Before diving into use cases, it's worth getting precise about terminology, because the market is flooded with "AI-washing," and compensation leaders can't afford to confuse categories.

The key distinction is this: where a copilot helps you draft the email explaining a pay decision, an agent can pull benchmark data, compare it against internal equity, flag compression risk, and then help you draft the communication, all in one interaction.

This distinction matters for compensation because the function has lagged behind recruiting and other HR domains in AI adoption for understandable reasons. Pay decisions carry higher compliance risk, draw greater executive visibility, and touch every employee directly. The data environments are messier: a patchwork of HRIS exports, survey cuts, and internal spreadsheets. Generic AI tools, no matter how capable, struggle without the deep context that a compensation analyst brings.

That's precisely why purpose-built agents (tools trained on compensation logic, connected to real market data, and designed to operate within governance guardrails) represent a fundamentally different value proposition from asking a general-purpose LLM to help with your merit cycle.

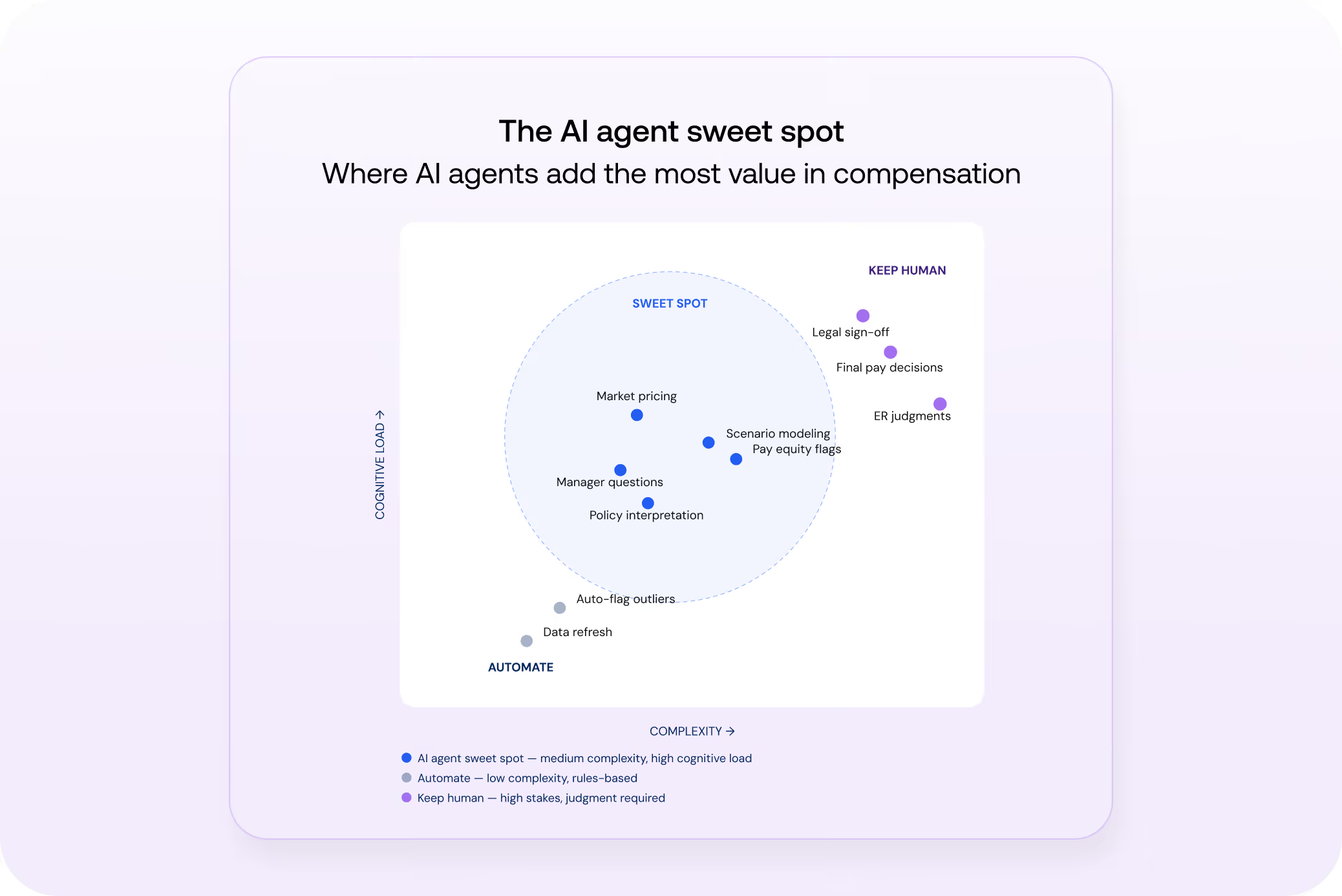

The "Sweet Spot": Where AI Agents Earn Their Keep

Not every task in compensation is a good fit for AI agents. The best use cases sit at the intersection of medium complexity, high cognitive load, and low tolerance for manual error.

The right side of the chart matters just as much as the center. Final pay decisions, sensitive employee relations judgments, and legal sign-offs should stay human. The framing is advisory, not autonomous: better judgment at scale, not judgment-free compensation.

Here's where the sweet spot shows up in practice:

Market Pricing and Benchmark Interpretation

This is the single most common request that buries comp teams. When a VP asks what a Staff Engineer should be paid in Austin versus New York, answering properly takes 45 minutes: pulling benchmarks, adjusting for geography, and contextualizing the result within your pay philosophy. An AI agent can do it in moments.
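To make the pricing steps concrete, here is a minimal sketch of the arithmetic behind a geography-adjusted benchmark. Everything in it is an assumption for illustration: the benchmark figures, the geographic differentials, and the names `NATIONAL_BENCHMARK`, `GEO_DIFFERENTIAL`, and `priced_target` are hypothetical, not values or functions from any survey or product. The target percentile stands in for your pay philosophy.

```python
# Hypothetical national benchmark for one job and level,
# keyed by percentile (annual base pay). Illustrative values only.
NATIONAL_BENCHMARK = {50: 200_000, 75: 230_000}

# Hypothetical geographic differentials relative to the national figure.
GEO_DIFFERENTIAL = {"New York": 1.10, "Austin": 0.95}


def priced_target(city, target_percentile):
    """Scale the national benchmark at the target percentile by the city's differential."""
    base = NATIONAL_BENCHMARK[target_percentile]
    return round(base * GEO_DIFFERENTIAL[city])


if __name__ == "__main__":
    # A pay philosophy of "target the market median" maps to the 50th percentile.
    for city in ("Austin", "New York"):
        print(city, priced_target(city, 50))
```

The calculation itself is simple. What takes the time in practice is locating the right benchmark cut and differential, which is the part an agent connected to market data is meant to shorten.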

Scenario Modeling During Comp Cycles

Merit and promotion cycles are high-stakes and time-compressed. Leaders need to model budget allocation scenarios, understand equity implications, and identify outliers under deadline pressure. AI agents can simulate these dynamically, surfacing cost impact, compression risks, and pay equity concerns that would take hours to model manually.
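As a rough illustration of what such a simulation involves, the sketch below applies a flat merit increase, totals the cost impact, and flags compression, meaning reports whose pay sits too close to their manager's. The `Employee` class, the function names, the 10% minimum gap, and all salaries are hypothetical assumptions; a real cycle would use differentiated increases and your own compression policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Employee:
    name: str
    salary: float
    manager: Optional[str] = None  # name of the direct manager, if any


def apply_merit(employees, merit_pct):
    """Return (new salary by name, total added cost) for a flat merit increase."""
    new_salaries = {e.name: round(e.salary * (1 + merit_pct), 2) for e in employees}
    added_cost = round(sum(new_salaries[e.name] - e.salary for e in employees), 2)
    return new_salaries, added_cost


def compression_flags(employees, salaries, min_gap=0.10):
    """List reports whose manager earns less than min_gap above them."""
    flagged = []
    for e in employees:
        if e.manager is None:
            continue
        gap = salaries[e.manager] / salaries[e.name] - 1
        if gap < min_gap:
            flagged.append(e.name)
    return flagged


# Hypothetical three-person team: Ana manages Ben and Cy.
team = [
    Employee("Ana", 180_000),
    Employee("Ben", 170_000, manager="Ana"),
    Employee("Cy", 140_000, manager="Ana"),
]

new_salaries, added_cost = apply_merit(team, 0.03)
flags = compression_flags(team, new_salaries)
```

Rerunning `apply_merit` with different percentages is the scenario comparison. The flagged names are inputs to a human review, not decisions.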

Ad Hoc Executive and Manager Questions

"Are we competitive for this role?" "What would it cost to bring our engineering team to the 75th percentile?" Each one pulls you into a research cycle. An agent connected to your live market data can field these directly or draft the answer for your review, collapsing the time between question and insight.

Where Agents Should Not Go (Yet)

The Pave survey found that nearly 60% of compensation leaders are skeptical about fully automating pay decisions. As of today, most agree that AI agents should not make final pay decisions for individual employees, handle sensitive employee-relations matters, or provide legal or compliance sign-off.

Other findings back this up. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, driven by escalating costs, unclear business value, or inadequate risk controls. The organizations most at risk are those that skip governance in favor of speed. For compensation teams, the lesson is clear: starting with advisory use cases isn't timid; it's a strategic decision to survive the hype cycle.

The Trust Equation: Why Governance Is the Real Product

For compensation leaders, the trust question is the whole ballgame. Pave's survey puts accuracy of recommendations as the top concern (68%), followed by data security (64%) and the ability to understand company-specific context (63%).

These aren't irrational anxieties—compensation decisions affect livelihoods, create legal exposure, and shape perceptions of fairness. A wrong recommendation that gets implemented does real damage.

This is why the governance model around an AI agent matters more than the AI itself. Any agent worth considering should have:

- Human-in-the-loop controls. Every recommendation should be reviewable, with review as the default.

- Explainability of outputs. When an agent recommends a pay range or flags compression, you need to see why—what data sources, what assumptions, what confidence level.

- Audit trails. Regulators and stakeholders increasingly expect documentation of how pay decisions were informed. Agents should generate a reviewable record of the data, logic, and recommendations they provided.
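One way to picture these three requirements together is a single record that travels with every recommendation. The sketch below is an assumption about how such a record could be shaped, not a description of any vendor's implementation; the `Recommendation` class, its fields, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Recommendation:
    question: str
    recommendation: str
    data_sources: List[str]   # explainability: what data was used
    assumptions: List[str]    # explainability: what was assumed
    confidence: str           # e.g. "low", "medium", "high"
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    reviewed_by: Optional[str] = None
    decision: Optional[str] = None  # set only by a human reviewer

    def review(self, reviewer, decision):
        """Record a human decision; nothing is actionable before this is called."""
        if decision not in ("approved", "rejected"):
            raise ValueError("decision must be 'approved' or 'rejected'")
        self.reviewed_by = reviewer
        self.decision = decision

    @property
    def actionable(self):
        # Human-in-the-loop: unreviewed recommendations default to not actionable.
        return self.decision == "approved"

    def to_audit_json(self):
        """Serialize the full record for an audit trail."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example values.
rec = Recommendation(
    question="Is this offer range competitive?",
    recommendation="Range is within the target band",
    data_sources=["market benchmark cut (hypothetical)", "internal pay bands"],
    assumptions=["target is the market median"],
    confidence="medium",
)
was_actionable_before_review = rec.actionable
rec.review("J. Doe", "approved")
audit_entry = json.loads(rec.to_audit_json())
```

The design choice worth noticing is the default: a recommendation starts out not actionable, and only an explicit human review changes that.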

The Pave survey reinforces where the industry stands: 54% of organizations are considering AI for individual comp decisions but haven't implemented it, and another 21% have legal concerns. Only 6% are actively piloting. The right response isn't to wait indefinitely, but to start with tools designed from the ground up for this level of scrutiny.

As more vendors enter the comp-specific AI space claiming autonomous capabilities, the distinction between 'I recommend' and 'I've decided' is becoming the defining line buyers use to evaluate risk.

Tips for Getting Started

If you're convinced that AI agents have a role in your compensation function but aren't sure where to begin, the following approach can help you build confidence without overcommitting.

Start with advisory use cases, not execution. Begin with tasks in which an agent provides recommendations for a human to review and act on. Market pricing inquiries, benchmark comparisons, and data summarization are low-risk, high-value starting points. These are also the areas where adoption momentum is strongest: job matching (45%), job architecture and leveling (41%), and analysis of external data (41%) all rank in the top tier of current or planned AI use cases.

Don't try to transform your entire function at once. Identify the single workflow that consumes the most time relative to its strategic value, and start there. For many teams, that's fielding ad hoc pricing requests. For others, it's the manual preparation work before a merit cycle.

Prove value, then expand. Define success metrics upfront: time saved per request, reductions in escalations, and the team's confidence in the outputs. Avoid the temptation to measure only efficiency. Trust and quality matter just as much in early adoption. Once your team has validated accuracy and reliability on a contained use case, you earn the right to expand scope, from market pricing to scenario modeling, from ad hoc queries to proactive anomaly detection. Each expansion should be deliberate and measured.

Choosing the Right Tools: Generic vs. Purpose-Built

Over half of compensation leaders now rate AI capabilities as significantly or extremely important when selecting technology vendors—AI features are moving from "nice to have" to evaluation criteria. But general-purpose AI models, while impressive at language tasks, lack what compensation work demands: access to real-time market data, understanding of job architectures, knowledge of pay philosophy concepts, and the ability to reason about internal equity alongside external benchmarks.

Purpose-built compensation agents close these gaps. When evaluating vendors, push beyond the marketing language. Ask to see how a recommendation is generated. Ask what data the model draws on and how up-to-date it is. Ask about bias testing and explainability. Ask whether the tool is truly an agent—capable of reasoning across data sources and maintaining context—or a chatbot with a new label.

Gartner has explicitly cautioned against "agentwashing," in which vendors rebrand basic chatbots as agents by claiming autonomy or integration to justify the term. But a newer version of this problem is emerging: vendors that swing the other direction, marketing 'autonomous' compensation agents that automate planning and analysis and generate manager narratives without the advisory guardrails that comp decisions require.

The Shift From Reactive to Strategic

The real promise of AI agents in compensation isn't doing the same work faster. It's changing what compensation teams spend their time on.

When an agent handles the first draft of a market analysis, fields routine manager questions, and flags anomalies before you have to go looking for them, the comp team's time shifts from answering questions to shaping strategy. This isn't about needing fewer compensation professionals. It's about getting more strategic leverage from every expert on the team.

The organizations that win won't be the ones that adopt AI agents fastest. They'll be the ones that adopt them most responsibly, building trust with every interaction, maintaining human accountability for every decision, and using the time they reclaim to do the kind of strategic work that no AI can replicate.

Ready to see what a compensation-specific AI agent looks like in practice? Explore Paige, Pave's AI compensation analyst, or take the AI Maturity Self-Assessment to benchmark where your team stands today.

Charles is a member of Pave's marketing team, bringing nearly 20 years of experience in HR strategy and technology. Prior to Pave, he advised CHROs and other HR leaders at CEB (now Gartner's HR Practice), supported benefits research initiatives at Scoop Technologies, and, most recently, led SoFi's employee benefits business, SoFi at Work. A passionate advocate for talent innovation, Charles is known for championing data-driven HR solutions.
